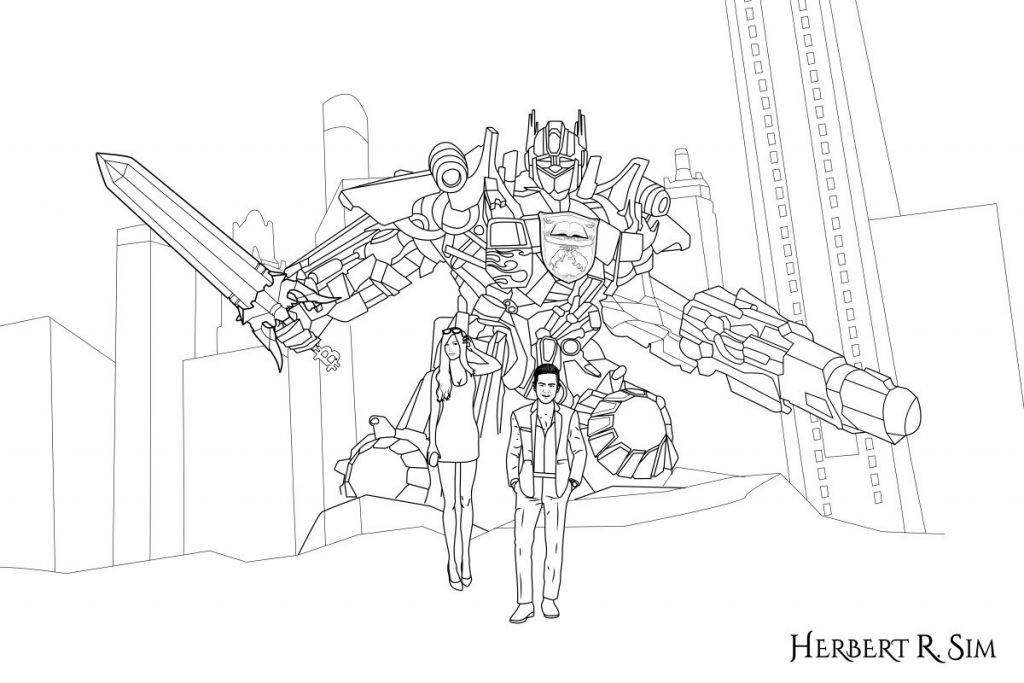

Referencing Transformers: Dark of the Moon (2011), I attempt to illustrate ‘Lethal Autonomous Weapons Systems’, portraying Optimus Prime as an artificially intelligent killer robot. And of course, it features myself, alongside Vanessa Emily.

——————————————————-

For centuries, weapons and machines deployed in warfare have operated under the direct agency of human will and effort. Flawed design and execution by humans have been the primary causes of problems associated with many of today’s computerised weapon systems. That, however, is fast changing as computer systems with some level of Artificial Intelligence (AI) become increasingly complex and capable. The result is semi-autonomous and autonomous weapons systems capable of functioning with little or no direct human involvement.

The technological aspect aside, from an ethics perspective, the “computerisation” and “robotisation” of warfare sits on a slippery slope, the endpoint of which can neither be predicted nor fully controlled.

Lethal Autonomous Weapons Systems (LAWS) raise important questions the answers to which could well have profound consequences.

LAWS Arms Race

With countries perpetually engaged in strategic competition with each other to gain an edge in their military and security capabilities, the development and deployment of LAWS have the potential to ignite a LAWS arms race worldwide. In today’s complex security landscape with multiple actors, a LAWS arms race would not be limited to the militaries of countries big and small, but would also draw in non-state actors like terrorist groups, weapons traffickers and rogue scientists.

The inexorable and dangerous logic of an arms race is such that technological superiority demands that a country acquire new weapons systems before its adversaries, who in turn will compete to attain or maintain that same superiority. In doing so, they collectively propel a LAWS arms race.

A major concern with the increasing autonomy of weapons systems is the concomitant increase in uncertainty as to how such weapons will perform in new situations, be it the field testing range, a wargame scenario, or an actual battlefield. The truism that ‘no battle plan survives first contact with the enemy’ is a reflection of the inherent complexity and unpredictability of warfare or any other crisis situation. Similarly, the best laid plans and controls for an autonomous weapon system are wont to go wrong when deployed in the field.

Mala in Se

According to ethicists, an essential concept in just war theory (morally justifiable war) and international humanitarian law is that certain activities such as genocide and the use of biological weapons are evil in and of themselves, or what Roman philosophers called “mala in se”. The argument, as applied to LAWS, is that autonomous machines lack discrimination, empathy and the capacity to make the proportional ethical judgments necessary for weighing civilian casualties against achieving mission objectives.

Is letting a machine gun pick targets of its own accord a good idea? Is it moral to delegate life and death decisions to machines, which cannot be held responsible for their actions? And if not the machine, who will bear the responsibility – the general, the political leader or the weapons developer? There are many unanswered questions and ethical quandaries to navigate.

One response to these questions is the notion that future machines will have the capacity for ethical discrimination and be able to make better moral choices than human soldiers. However, this is just a speculative projection for now. And if it does come true, there are far larger and more profound issues to address, such as the line between man and machine, and whether machines should be given the status of personhood.

——————————————————-

If you study the illustration closely, you’ll notice that Optimus Prime is holding the ‘Blade of Bitcoin’. He also has the logo of Crypto Chain University seared across his chestplate.

The background depicts an apocalyptic scenario featuring both Singapore and its iconic Marina Bay Sands (on the left), and Malaysia and its iconic Petronas Twin Towers (on the right).

——————————————————-

The Way Forward?

The United Nations, its Office for Disarmament Affairs in particular, has raised pertinent questions about the emergence of LAWS and called into question the adequacy of existing measures to apply the rules of armed conflict to autonomous weapon systems. The main purpose of the rules of armed conflict is to protect civilians from unacceptable harm, which entails there being adequate human accountability at all times. Yet, with LAWS, how can accountability be maintained if humans are no longer involved in the decision to use force?

The Office for Disarmament Affairs stated that the international community need not wait for an autonomous weapon system to fully emerge before adopting measures to mitigate and eliminate unacceptable risk. Even as LAWS are undergoing research and development, it is clear that they merit special consideration in addressing their ethical implications.

International non-governmental organisation Human Rights Watch has called for governments to pre-emptively ban fully autonomous weapons because of the danger they pose to civilians in armed conflict. In conjunction with the Harvard Law School Human Rights Clinic, the NGO proposed an international treaty that would absolutely prohibit the development, production and use of fully autonomous weapons.

The US Department of Defense released a directive in 2012 titled “Autonomy in Weapon Systems”, in which it acknowledged the challenges that LAWS would pose. Essentially, the directive requires a human being to be “in-the-loop” when decisions are made about using lethal force. The directive is believed to be the first policy announcement by any country on fully autonomous weapons.

It remains to be seen whether such statements by international bodies, appeals by NGOs and government directives will translate into concrete international laws, government action and widely adopted norms that adequately address the complex ethics of LAWS. The road ahead for LAWS is a long and winding one, and it is imperative that the journey be guided by an ethical compass.